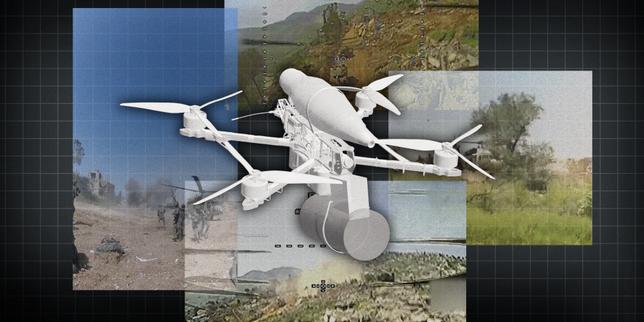

The departure of a high-ranking robotics lead from a premier AI laboratory is rarely a simple career pivot; it signals a fundamental rupture in the dual-use alignment of autonomous systems. When the architect of a physical intelligence program exits citing the transition from "research for humanity" to "infrastructure for kinetic operations," the exit exposes a structural instability in the current AI business model. This friction arises from the collapsing distance between General Purpose AI (GPAI) and Autonomous Weapon Systems (AWS). To understand this shift, one must analyze the technical convergence of computer vision, reinforcement learning, and the growing demand for autonomous logistics in non-permissive environments.

The Technical Convergence of Commercial and Martial Robotics

The logic that separates "helpful home robots" from "surveillance and strike assets" is increasingly a rhetorical distinction rather than a technical one. The underlying software stack required for a robot to navigate a complex warehouse is nearly identical to the stack required for urban reconnaissance.

Three primary sub-systems drive this convergence:

- Visual Odometry and SLAM (Simultaneous Localization and Mapping): High-precision spatial awareness allows a machine to operate without GPS. While marketed for domestic vacuuming or industrial inventory, these systems are the prerequisite for operation in GPS-denied combat zones.

- Edge-Processed Computer Vision: The ability to classify objects (human vs. obstacle) in real time, on the device itself. If a model can identify a "human" to avoid a collision in a kitchen, the same weights can be tuned to "track" a target in a security context.

- Reinforcement Learning (RL) for Locomotion: OpenAI’s earlier work on dexterous robotic hands and simulated bipedal locomotion relied on RL. The same optimization loops are used to make quadrupedal "dogs" or drones resilient to physical interference or terrain shifts.

The exit of a robotics lead suggests that the "firewall" between these research applications has been breached. The transition of OpenAI from a non-profit-governed research entity to a partner for massive government and defense contractors changes the optimization target of the model. The cost function is no longer just "accuracy" or "safety"; it becomes "mission success."
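The point about the cost function can be made concrete with a toy reward. The sketch below is illustrative only and is not drawn from any real system: `RewardWeights`, `step_reward`, the two weightings, and the two candidate behaviors are all hypothetical. It shows how the same scoring code prefers a different behavior once the penalty on safety violations is turned down relative to task progress.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardWeights:
    """Hypothetical weights on task progress and on safety violations."""
    task_progress: float
    safety_penalty: float


def step_reward(progress: float, safety_violations: int, w: RewardWeights) -> float:
    """Scalar reward for one step: progress earns reward, violations cost it."""
    return w.task_progress * progress - w.safety_penalty * safety_violations


# Assumed weightings, chosen only to illustrate the contrast.
CIVILIAN = RewardWeights(task_progress=1.0, safety_penalty=10.0)
MISSION = RewardWeights(task_progress=1.0, safety_penalty=0.1)

# Two candidate behaviors as (progress, safety_violations):
# a cautious one that is slower but clean, and an aggressive one
# that finishes the task at the cost of one violation.
CAUTIOUS = (0.5, 0)
AGGRESSIVE = (1.0, 1)


def preferred(w: RewardWeights) -> str:
    """Return the name of the behavior that scores higher under these weights."""
    if step_reward(*CAUTIOUS, w) >= step_reward(*AGGRESSIVE, w):
        return "cautious"
    return "aggressive"


print(preferred(CIVILIAN))
print(preferred(MISSION))
```

Nothing in the scoring code changes between the two calls; only the weights do. That is the sense in which a new customer can change the optimization target without touching the architecture.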

The Economic Incentives of the Defense-Industrial Pivot

The pivot toward defense-adjacent applications is driven by capital requirements. Training trillion-parameter models requires liquidity that commercial consumer products (like ChatGPT) may not immediately recoup due to high inference costs. The defense sector offers high-margin, long-term contracts with lower churn than the volatile B2C market.

This creates a Strategic Lock-in. Once an AI firm accepts funding or infrastructure support linked to defense initiatives, the research roadmap shifts to accommodate "Dual-Use" requirements.

- Data Privacy: Military contracts require siloed, on-premise deployments. This forces the engineering team to prioritize "small-model" efficiency and offline capabilities, features essential for a drone on a battlefield but less critical for a cloud-based assistant.

- Sensor Fusion: Defense applications demand integration with LiDAR, thermal imaging, and radio-frequency sensors. This diverts engineering talent away from natural language processing and toward "Physical AI."

The friction voiced by exiting leadership stems from the "Mission Creep" of the organizational charter. When the objective shifts from democratizing AGI to securing a nation-state's technological edge, the ethical framework of the individual contributor often reaches a breaking point.

The Three Pillars of Kinetic AI Risk

The concern regarding "war and surveillance" is often dismissed as alarmism, but a structural analysis reveals three specific mechanisms where AI transforms from a tool into a weaponized system.

1. The Compression of the OODA Loop

The "Observe-Orient-Decide-Act" (OODA) loop is the fundamental cycle of decision-making. AI shortens this loop to milliseconds. Automating the "Decide" and "Act" phases through robotics sharply raises the risk of "Flash Wars": algorithmic escalations that unfold faster than any human can intervene. If an OpenAI-derived model is used to manage swarm intelligence, the human is no longer "in the loop" but merely "on the loop," acting as a symbolic supervisor to an automated process.
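The gap between "in the loop" and "on the loop" can be put in rough numbers. The sketch below is a back-of-the-envelope calculation, not a model of any real system: the 5 ms machine cycle and the 1.5 s human response time are assumed figures, and `cycles_in_window` is a hypothetical helper. Times are integer milliseconds to keep the arithmetic exact.

```python
def cycles_in_window(window_ms: int, machine_cycle_ms: int, human_approval_ms: int = 0) -> int:
    """Count full decide-act cycles completed within a time window.

    human_approval_ms > 0 models a human "in the loop": every cycle waits
    for approval. human_approval_ms == 0 models a human "on the loop":
    the machine cycles freely while the human only monitors.
    """
    cycle_ms = machine_cycle_ms + human_approval_ms
    if cycle_ms <= 0:
        raise ValueError("total cycle time must be positive")
    return window_ms // cycle_ms


# Assumed figures for illustration only.
MACHINE_CYCLE_MS = 5        # one automated decide-act cycle
HUMAN_RESPONSE_MS = 1_500   # time for a human to notice and respond
WINDOW_MS = 10_000          # a ten-second engagement

in_the_loop = cycles_in_window(WINDOW_MS, MACHINE_CYCLE_MS, HUMAN_RESPONSE_MS)
on_the_loop = cycles_in_window(WINDOW_MS, MACHINE_CYCLE_MS)
before_first_veto = cycles_in_window(HUMAN_RESPONSE_MS, MACHINE_CYCLE_MS)

print(in_the_loop)        # 6
print(on_the_loop)        # 2000
print(before_first_veto)  # 300
```

Under these assumptions, a supervisor "on the loop" cannot register a veto until roughly 300 automated cycles have already run, which is what makes the supervision largely symbolic.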

2. The Scalability of Surveillance

Traditional surveillance is limited by human attention spans. AI-driven robotics removes this bottleneck. A robotics lead understands that the goal of "general-purpose robotics" is to create a platform. If that platform is integrated with state surveillance apparatuses, it enables a persistent, autonomous monitoring state that was previously cost-prohibitive. The "agentic" nature of new models means these systems don't just record; they investigate.

3. The Lowering of the Kinetic Threshold

The primary deterrent to kinetic conflict is the risk to human personnel. Autonomous robotics removes this barrier. By providing the software "brains" for expendable hardware, AI firms effectively lower the political and economic cost of engaging in conflict. This creates a moral hazard: when war is cheap and bloodless for the aggressor, it becomes a more frequent tool of foreign policy.

Structural Bottlenecks in Ethics Governance

The departure of key personnel highlights the failure of internal "Ethics Boards" or "Safety Committees." These bodies are often advisory and lack the power to veto revenue-generating contracts.

The misalignment between researchers and executives is a Principal-Agent Problem. The principal (the corporation and its executives) must maximize shareholder value or secure the "compute" necessary for survival; the agent (the researcher hired to do the work) wants to advance science for broad benefit. In a high-interest-rate environment where "compute" is the new oil, the leverage lies entirely with those who can pay for the chips.

This creates a "Brain Drain" of specific talent. The engineers most capable of building autonomous systems are often those most aware of their potential for misuse. This results in a self-selection bias within the organization: those who remain are either those who agree with the defense pivot or those whose apathy is subsidized by equity.

The Strategic Realignment of Robotic Intelligence

The exit of an AI robotics chief is not an isolated HR event but a data point in the "Great Decoupling" of AI research. We are seeing the emergence of two distinct tracks for artificial intelligence:

- Open/Transparent Track: Research focused on interpretability, human-centric tools, and open-source accessibility.

- Sovereign/Kinetic Track: Research focused on "Hardened AI"—systems that are resilient, autonomous, and integrated into the national security stack.

The move by OpenAI to remove the explicit ban on "military and warfare" use cases from its usage policies was the formalization of this transition. For a robotics expert, this change isn't just a legal tweak; it is a fundamental shift in the "Environment Variable" of their work. They are no longer building a digital assistant; they are building the nervous system for 21st-century power projection.

The immediate consequence of this leadership vacuum in the robotics division is a likely delay in the "embodiment" of GPT models. While the linguistic capabilities of AI continue to scale, the ability of that intelligence to interact safely and reliably with the physical world requires a level of precision and ethical oversight that is currently in flux. The "Cost of Conscience" for these firms is the loss of the very minds that made their initial breakthroughs possible.

The strategic play for competing firms is to capture this "Exiled Talent" by establishing a "Civilian-Only" research charter with verifiable, third-party audits. However, the economic gravity of defense spending makes this a difficult position to maintain. For the individual consultant or strategist, the move is to hedge against the centralization of robotic power by investing in decentralized, edge-based AI that remains under the control of the end-user rather than the platform provider. The battle is no longer about who has the best model, but who controls the "Act" phase of the OODA loop.

The only logical path forward for organizations losing top-tier talent over these issues is a formal "Disarmament of Architecture." This involves building "Air-Gapped Ethics"—hardcoding limitations into the model's weights that prevent it from functioning in specific kinetic contexts. If the industry fails to self-regulate through architectural constraints, the next phase will be a series of "Algorithmic Non-Proliferation Treaties" enforced by state actors, which will inevitably stifle commercial innovation in favor of national security interests.